Most People Are Using LLMs Incorrectly

There’s a common pattern: someone opens a chat interface, types a question as they would into Google, and expects a precise answer.

That approach breaks down quickly.

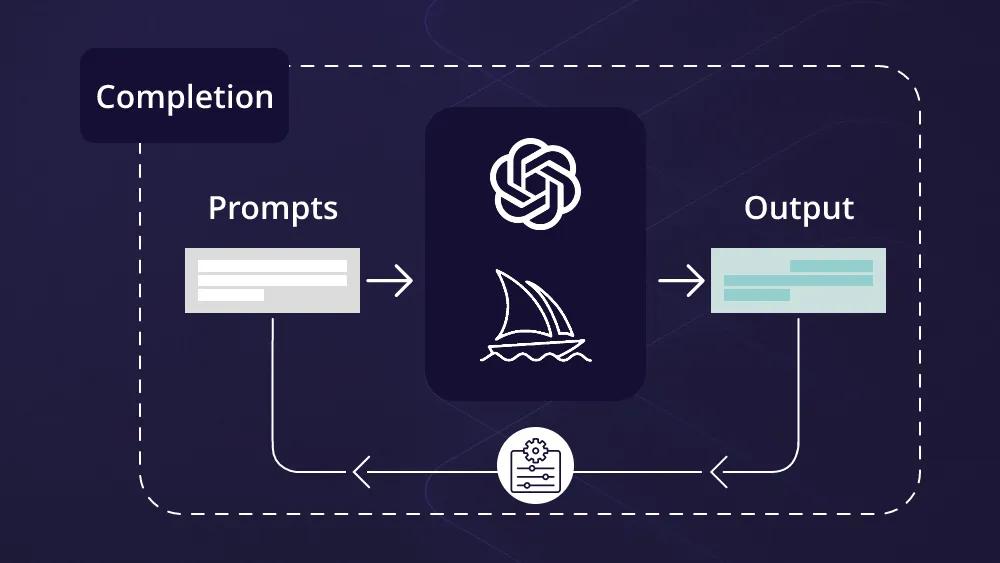

Large Language Models aren’t databases. They don’t retrieve—they construct. What you get depends heavily on how you shape the interaction.

If you want consistent, high-quality output, you have to treat prompting less like asking and more like configuring a system.

1. Start With a System-Level Instruction

Jumping straight into a question is where most prompts fail.

Instead, define the model’s role and boundaries upfront:

- “You are a senior backend architect…”

- “You are a legal analyst specializing in contract risk…”

This does more than set tone: it narrows the range of answers the model considers. You’re effectively choosing the lens through which the model processes the problem.
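
As a rough sketch of how this looks in code, the snippet below places the role in a separate system message ahead of the question. The `role`/`content` message list is an assumption modeled on the format many chat APIs accept, `build_messages` is a hypothetical helper, and everything in the role text after the opening phrase is invented for illustration.

```python
from typing import Dict, List


def build_messages(role_description: str, question: str) -> List[Dict[str, str]]:
    """Put the role and its boundaries in a system message, ahead of the question."""
    return [
        {"role": "system", "content": role_description},
        {"role": "user", "content": question},
    ]


# Illustrative values only; swap in your own role and question.
messages = build_messages(
    "You are a senior backend architect. Answer only questions about "
    "service design, and say so when information is missing.",
    "How should we split a monolith that shares one database?",
)
```

Keeping the role in its own message means it can stay fixed while the question changes from call to call.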

2. Replace Vague Language With Hard Constraints

Words like “good,” “fast,” “creative,” or “detailed” are open to interpretation.

Constraints are not.

Better prompts include:

- Word limits

- Technology boundaries

- Output formats

For example:

- “Limit the response to 150 words.”

- “Use only native Python libraries.”

Precision here directly reduces ambiguity in output.
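
One way to keep constraints explicit is to attach them as a list and then check the response against the ones that can be measured. The sketch below is a minimal illustration; `with_constraints` and `within_word_limit` are hypothetical helper names, and the task text is made up.

```python
from typing import List


def with_constraints(task: str, constraints: List[str]) -> str:
    """Append explicit, checkable constraints to a task description."""
    lines = [task, "", "Constraints:"]
    lines += [f"- {constraint}" for constraint in constraints]
    return "\n".join(lines)


def within_word_limit(text: str, limit: int) -> bool:
    """Check a response against the word limit stated in the prompt."""
    return len(text.split()) <= limit


prompt = with_constraints(
    "Explain how to parse a CSV file.",  # illustrative task
    ["Limit the response to 150 words.", "Use only native Python libraries."],
)
```

A constraint like a word limit has the added benefit that you can verify it automatically, which is not possible with "keep it short."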

3. Force Structured Reasoning

One of the simplest ways to improve output quality is to make the model show its intermediate steps before it commits to an answer.

Instead of asking for an answer, ask for:

- A step-by-step reasoning process

- A breakdown before conclusions

This reduces logical jumps and improves reliability—especially for technical or multi-step problems.
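
A small sketch of this idea: append a fixed reasoning instruction to the question and ask for the conclusion on a clearly marked final line, so it can be pulled out afterwards. The `Answer:` marker and both function names are assumptions chosen for this example, not a standard.

```python
from typing import Optional

# Illustrative wording; the 'Answer:' marker is a convention invented here.
REASONING_SUFFIX = (
    "Work through the problem step by step.\n"
    "List the facts you rely on, then the intermediate steps, and only then "
    "give the conclusion on a final line starting with 'Answer:'."
)


def ask_for_reasoning(question: str) -> str:
    """Attach the step-by-step instruction to a question."""
    return f"{question}\n\n{REASONING_SUFFIX}"


def extract_answer(response: str) -> Optional[str]:
    """Return the text after the last 'Answer:' line, or None if there is none."""
    for line in reversed(response.splitlines()):
        stripped = line.strip()
        if stripped.startswith("Answer:"):
            return stripped[len("Answer:"):].strip()
    return None
```

Separating the reasoning from the marked conclusion lets you read the steps when something looks wrong and ignore them when it does not.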

4. Use Examples to Shape Output (Few-Shot Prompting)

If you want a specific format or tone, don’t describe it—demonstrate it.

Provide a few short examples before your actual request. This gives the model a pattern to replicate.

Without examples, the model falls back on a generic format.

With examples, its output tends to follow the pattern you showed.
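
In code, few-shot prompting often amounts to placing input/output pairs before the real request. The sketch below assumes a `role`/`content` message list like the one many chat APIs accept; `few_shot_messages` is a hypothetical helper and the commit-message examples are invented.

```python
from typing import Dict, List, Tuple


def few_shot_messages(
    instruction: str,
    examples: List[Tuple[str, str]],
    new_input: str,
) -> List[Dict[str, str]]:
    """Build a message list where each example is a user/assistant pair."""
    messages = [{"role": "system", "content": instruction}]
    for example_input, example_output in examples:
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    # The real request goes last, in the same shape as the example inputs.
    messages.append({"role": "user", "content": new_input})
    return messages


messages = few_shot_messages(
    "Rewrite each note as a one-line commit message.",
    [
        ("fixed the login thing", "Fix null check in login handler"),
        ("made tests faster", "Reduce test runtime by reusing fixtures"),
    ],
    "changed how we read the config",
)
```

Two or three pairs are usually enough to show the format; the examples carry the tone and length so the instruction does not have to describe them.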

5. Define What the Model Should Avoid

Most prompts focus only on what to include. That’s only half the equation.

Explicitly removing unwanted patterns improves quality:

- Avoid buzzwords

- Avoid passive voice

- Avoid generic disclaimers

This technique helps strip out the “AI tone” that often weakens otherwise solid content.
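
Some exclusions can also be checked after the fact. The sketch below builds an "avoid" instruction from a list of terms and scans a response for them. The term list and function names are illustrative assumptions, and only word-level rules such as buzzwords can be checked this way; something like passive voice needs a different approach.

```python
import re
from typing import List

BANNED_TERMS = ["synergy", "leverage", "game-changer"]  # illustrative buzzwords


def avoid_clause(terms: List[str]) -> str:
    """Turn a list of unwanted terms into an explicit instruction."""
    return "Do not use these words: " + ", ".join(terms) + "."


def find_banned(text: str, terms: List[str]) -> List[str]:
    """Return the terms that appear in text as whole words, ignoring case."""
    found = []
    for term in terms:
        pattern = rf"\b{re.escape(term)}\b"
        if re.search(pattern, text, flags=re.IGNORECASE):
            found.append(term)
    return found
```

If `find_banned` returns anything, that is a concrete signal to send the response back for another pass.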

6. Treat Output Like a First Draft

The first response is rarely the best one.

Instead of rewriting manually, ask the model to critique itself:

- “Identify logical gaps in your answer.”

- “Where could this fail in production?”

LLMs are often effective at finding weaknesses in their own outputs when the critique request is specific.
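
The draft, critique, and revision steps can be chained. The sketch below is one possible arrangement, not a prescribed method: `call_model` is a hypothetical stand-in for whatever function sends a prompt to your model and returns its text, and the revision wording is invented.

```python
from typing import Callable, Dict

CRITIQUE_PROMPT = "Identify logical gaps in your answer."


def draft_critique_revise(task: str, call_model: Callable[[str], str]) -> Dict[str, str]:
    """Run three calls: draft an answer, critique it, then revise it."""
    draft = call_model(task)
    critique = call_model(
        f"Task:\n{task}\n\nAnswer:\n{draft}\n\n{CRITIQUE_PROMPT}"
    )
    revised = call_model(
        f"Task:\n{task}\n\nAnswer:\n{draft}\n\nCritique:\n{critique}\n\n"
        "Rewrite the answer so it addresses the critique."
    )
    return {"draft": draft, "critique": critique, "revised": revised}


def placeholder_model(prompt: str) -> str:
    """Stand-in for a real model call so the sketch runs without an API."""
    return f"[model output for a {len(prompt)}-character prompt]"


result = draft_critique_revise("Design a retry policy for a payment API.", placeholder_model)
```

Returning all three stages, rather than only the revision, makes it easy to see whether the critique changed anything.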

7. Use Reusable Prompt Structures

Well-structured prompts behave like functions.

Instead of rewriting instructions every time, create reusable templates:

- [INPUT_TEXT]

- [TARGET_AUDIENCE]

- [OUTPUT_FORMAT]

This keeps your logic consistent and reduces errors across repeated tasks.
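
To make the function analogy concrete, here is a minimal sketch using `string.Template` from the Python standard library. The three placeholders mirror the ones above; the surrounding prompt wording and the `render_prompt` name are assumptions for illustration.

```python
from string import Template

# The fixed instructions live in one place; only the placeholders vary.
REWRITE_PROMPT = Template(
    "Rewrite the text below for $target_audience.\n"
    "Return the result as $output_format.\n\n"
    "Text:\n$input_text"
)


def render_prompt(input_text: str, target_audience: str, output_format: str) -> str:
    """Fill the template; substitute() raises KeyError if a placeholder is missing."""
    return REWRITE_PROMPT.substitute(
        input_text=input_text,
        target_audience=target_audience,
        output_format=output_format,
    )


prompt = render_prompt(
    "Our API now returns 429 when the rate limit is exceeded.",
    "new engineers",
    "three bullet points",
)
```

Using `substitute` rather than `safe_substitute` is a deliberate choice here: a forgotten argument fails loudly instead of sending a prompt with a stray `$placeholder` in it.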

8. Prime With Context Before Asking

The model doesn’t have access to your internal systems, documents, or assumptions.

If the task depends on specific context:

- Provide relevant data

- Include code snippets

- Share constraints upfront

The more grounded the context, the more reliable the output.
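
A simple way to do this consistently is to assemble labeled context sections ahead of the question. The sketch below is illustrative: `prompt_with_context` is a hypothetical helper, and the labels and sample content are made up.

```python
from typing import Dict


def prompt_with_context(question: str, context: Dict[str, str]) -> str:
    """Place each labeled context section before the question, in the given order."""
    sections = [f"{label}:\n{text.strip()}" for label, text in context.items()]
    sections.append(f"Question:\n{question}")
    return "\n\n".join(sections)


prompt = prompt_with_context(
    "Why does this function return None for empty input?",
    {
        "Code": "def first(items):\n    for item in items:\n        return item",
        "Constraints": "Python 3.11, no third-party libraries.",
    },
)
```

Putting the question last means the model reads the data, code, and constraints before it reaches the request.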

9. Control Output Style Through Language

Even without direct access to sampling parameters such as temperature, you can influence output style through instruction.

For example:

- “Provide a conservative, fact-based analysis.”

- “Explore unconventional, high-risk solutions.”

These cues shape how the model approaches the problem—either narrowing or expanding its reasoning.

10. Stop Treating the Model Like a Person

This is a common source of inefficiency.

The model:

- Doesn’t need politeness

- Doesn’t benefit from vague phrasing

- Doesn’t improve with conversational fluff

Clear, direct, and structured inputs consistently outperform casual requests.

Why This Matters for Teams and Businesses

Prompt quality isn’t just a personal productivity skill—it directly impacts:

- Engineering efficiency

- Content quality

- Decision support systems

- Automation reliability

Teams that standardize how they interact with LLMs tend to see:

- More consistent outputs

- Less rework

- Faster iteration cycles

Final Takeaway

Better prompts don’t come from writing more—they come from thinking more precisely.

Once you stop treating an LLM like a search engine and start treating it like a configurable system, the quality of output changes immediately.

And more importantly, it becomes predictable.